UNT College of Engineering researchers Qing Yang and Song Fu are working on ways to build safer autonomous vehicles by linking the vehicles' sensors through vehicle-to-vehicle (V2V) communication. It’s a move that could shift the way the automotive industry manufactures these vehicles.

Yang and Fu have outfitted two golf carts with sensors, including LiDAR, cameras and radar, along with deep learning software, so the vehicles can communicate with one another.

“Most autonomous cars out now may only have a limited number of sensors on them, but by connecting the vehicles and merging the data, you could in essence multiply the number of sensors collecting data and communicating with each other, extending the range and accuracy of the data,” said Yang, assistant professor of computer science and engineering.

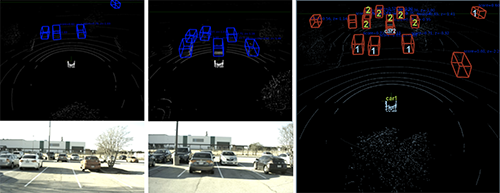

The NVIDIA DriveWorks deep learning software that Yang and Fu are using not only connects the golf carts but also lets the vehicles collect, process and share data with one another more accurately. Most important, however, is its ability to fuse the data to create a clearer image of obstacles in an autonomous vehicle’s path.

“There are some objects that cannot be detected by either car, but when you fuse the data obtained from both cars, those objects could be detected. So, that means each car can detect part of the object but not be able to recognize it at all on their own,” said Yang.

“This is an issue with the current self-driving cars produced by companies studying this issue,” added Fu, associate professor of computer science and engineering. “They focus on one car so everything this car can see, that’s the decision it can make, but now we have situations that if you can merge the videos and LiDAR images from two cars, you can detect objects that cannot be detected by one car. With edge computing and vehicle-to-everything (V2X) communication, data from cars, traffic control devices, and even smart devices of pedestrians can be combined and processed in real time for accurate perception and coordination.”
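The idea the researchers describe, that two partial views can add up to one detection, can be illustrated with a toy example. The Python sketch below is not the team's code and does not use NVIDIA DriveWorks. It assumes hypothetical 2D LiDAR points, known vehicle poses, and a made-up rule that an obstacle counts as detected only when at least four points fall in the same one-meter grid cell. All function names, poses and thresholds are illustrative assumptions.

```python
import math
from collections import Counter


def to_shared_frame(points, pose):
    """Transform 2D points from a vehicle's local frame into a shared frame.

    pose is (x, y, yaw_radians): the vehicle's position and heading
    in the shared frame (assumed to be known, e.g. from GPS).
    """
    px, py, yaw = pose
    c, s = math.cos(yaw), math.sin(yaw)
    return [(px + c * x - s * y, py + s * x + c * y) for x, y in points]


def detect_cells(points, cell_size=1.0, min_points=4):
    """Return the grid cells that contain at least min_points points."""
    counts = Counter(
        (math.floor(x / cell_size), math.floor(y / cell_size))
        for x, y in points
    )
    return {cell for cell, n in counts.items() if n >= min_points}


# Hypothetical setup: two carts 20 m apart, facing each other.
pose_a = (0.0, 0.0, 0.0)
pose_b = (20.0, 0.0, math.pi)

# Each cart sees only two points on the same obstacle (made-up values,
# expressed in each cart's own local frame).
points_a = [(10.2, 0.3), (10.4, 0.6)]
points_b = [(9.4, -0.4), (9.3, -0.7)]

shared_a = to_shared_frame(points_a, pose_a)
shared_b = to_shared_frame(points_b, pose_b)

alone_a = detect_cells(shared_a)           # too few points for a detection
alone_b = detect_cells(shared_b)           # too few points for a detection
fused = detect_cells(shared_a + shared_b)  # combined view crosses the threshold

print("Cart A alone:", alone_a)
print("Cart B alone:", alone_b)
print("Fused:", fused)
```

In this toy setup neither cart collects enough points to flag the obstacle by itself, while the merged point set does. A real system would also have to handle sensor noise, timing differences and communication delays, none of which are modeled here.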

Yang and Fu are currently planning to take their tests to the next level, moving from the golf carts to actual autonomous vehicles.

“This setup we have here is a rather unique infrastructure that not many universities have,” said Fu. “As we move forward, we’re excited to see how our work can be integrated into the overall automotive industry.”